
Lifting load, i.e., the weight of a lifted object, is an important factor in lifting exposure analysis and risk assessment. The revised NIOSH lifting equation (RNLE) (Waters et al., 1993) requires the lifting load as an input to calculate the lifting index for lifting and lowering tasks, which is used to prevent lower back injury. Recent advances in computer vision algorithms provide an automated approach for detecting subjects and tracking their body parts in lifting videos, with successful applications in predicting lifting postures (Greene et al., 2019) and estimating hand and foot locations during lifting (Wang et al., 2019). The goal of this research is to explore the use of computer vision and deep learning algorithms for the automatic measurement of lifting load by analyzing body part movements extracted from a lifting video, without stopping to weigh the object.
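To illustrate why the load is a required input, the following is a minimal sketch of the RNLE calculation, assuming the metric form of the multipliers given by Waters et al. (1993). The function and parameter names are illustrative and not taken from this work. The frequency and coupling multipliers come from lookup tables in the original equation and are passed in here as plain numbers.

```python
LOAD_CONSTANT_KG = 23.0  # RNLE load constant (metric form)


def recommended_weight_limit(h_cm, v_cm, d_cm, a_deg, fm=1.0, cm=1.0):
    """Recommended weight limit (kg) from the RNLE task variables.

    h_cm: horizontal distance of the hands from the ankles midpoint
    v_cm: vertical height of the hands above the floor
    d_cm: vertical travel distance of the lift
    a_deg: asymmetry angle in degrees
    fm, cm: frequency and coupling multipliers (table lookups, assumed given)
    """
    hm = min(1.0, 25.0 / h_cm)            # horizontal multiplier, capped at 1
    vm = 1.0 - 0.003 * abs(v_cm - 75.0)   # vertical multiplier
    dm = min(1.0, 0.82 + 4.5 / d_cm)      # distance multiplier, capped at 1
    am = 1.0 - 0.0032 * a_deg             # asymmetric multiplier
    return LOAD_CONSTANT_KG * hm * vm * dm * am * fm * cm


def lifting_index(load_kg, rwl_kg):
    """Lifting index: the lifted load relative to the recommended limit."""
    return load_kg / rwl_kg


if __name__ == "__main__":
    # Hypothetical task geometry, chosen only for illustration.
    rwl = recommended_weight_limit(h_cm=40.0, v_cm=60.0, d_cm=50.0, a_deg=30.0)
    print(f"RWL = {rwl:.2f} kg, LI = {lifting_index(15.0, rwl):.2f}")
```

The task geometry fixes the recommended weight limit, but the lifting index cannot be computed until the load itself is known, which is the quantity this work aims to estimate from video.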


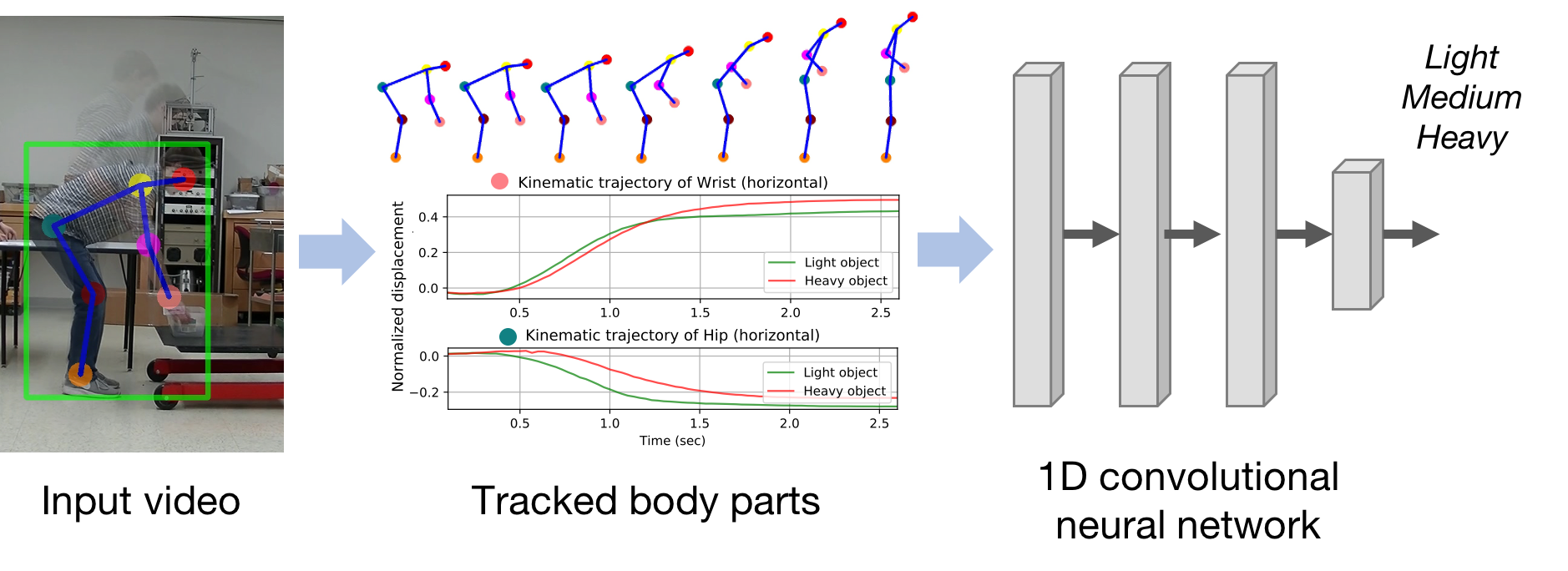

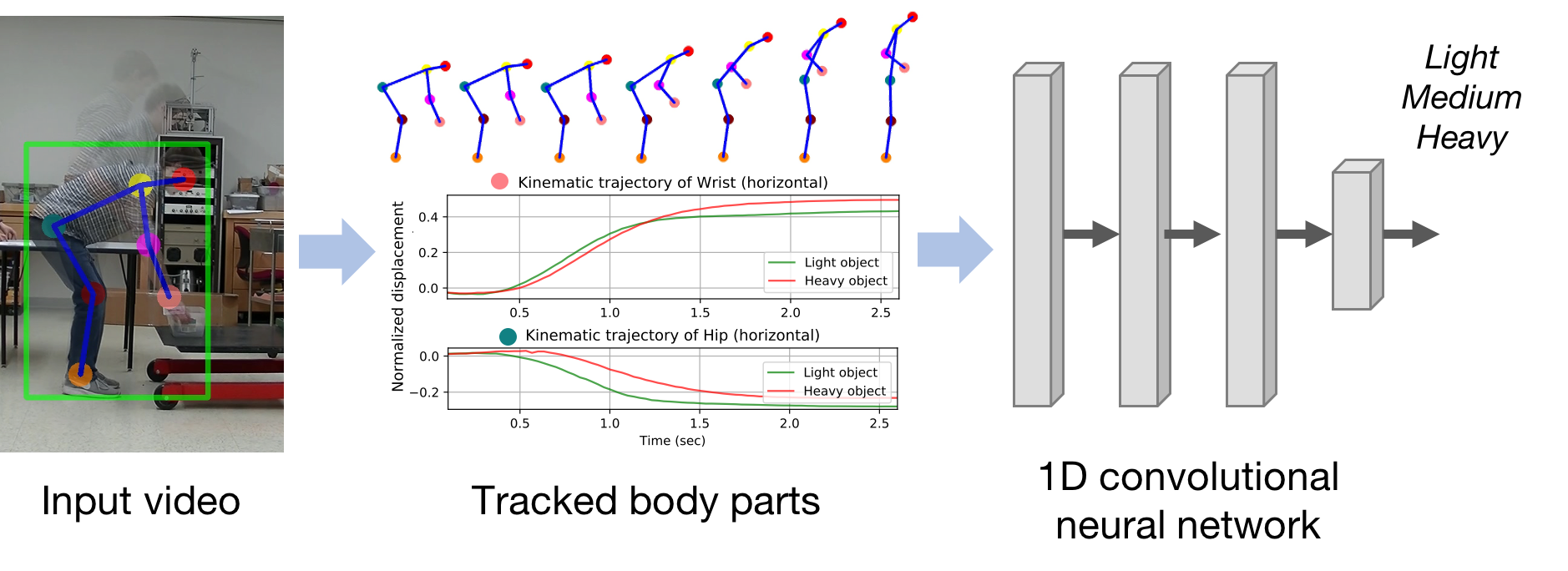

In this work, we present a first step towards automatic prediction of lifting load by analyzing human body movement extracted from lifting videos. We develop a computer vision system that tracks human body parts in an input video, followed by a deep neural network that analyzes the body part trajectories and predicts the weight of the lifted object. We evaluate the method in a user study with 19 participants. The method achieves an accuracy of 68.9% in distinguishing light from heavy objects and 47.4% in identifying three levels of lifting load (light, medium, heavy). These results suggest that video-based automatic analysis may be able to estimate the exertion level during manual lifting, a major factor in workplace injury.
